S111File

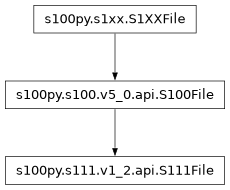

- class s100py.s111.v1_2.api.S111File(*args, **kywrds)

Bases: S100File

Attributes Summary

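| Attribute | Description |
|---|---|
| PRODUCT_SPECIFICATION | |
| attrs | Attributes attached to this object |
| driver | Low-level HDF5 file driver used to open file |
| epsg | |
| file | Return a File instance associated with this object |
| filename | File name on disk |
| id | Low-level identifier appropriate for this object |
| libver | File format version bounds (2-tuple: low, high) |
| meta_block_size | Meta block size (in bytes) |
| mode | Python mode used to open file |
| name | Return the full name of this object. |
| parent | Return the parent group of this object. |
| ref | An (opaque) HDF5 reference to this object |
| regionref | Create a region reference (Datasets only). |
| swmr_mode | Controls single-writer multiple-reader mode |
| userblock_size | User block size (in bytes) |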

Methods Summary

| Method | Description |
|---|---|
| build_virtual_dataset(name, shape, dtype[, ...]) | Assemble a virtual dataset in this group. |
| clear() | Remove all items from D. |
| close() | Close the file. |
| copy(source, dest[, name, shallow, ...]) | Copy an object or group. |
| create_dataset(name[, shape, dtype, data]) | Create a new HDF5 dataset. |
| create_dataset_like(name, other, **kwupdate) | Create a dataset similar to other. |
| create_empty_metadata() | |
| create_group(name[, track_order, track_times]) | Create and return a new subgroup. |
| create_virtual_dataset(name, layout[, fillvalue]) | Create a new virtual dataset in this group. |
| flush() | Tell the HDF5 library to flush its buffers. |
| get(name[, default, getclass, getlink]) | Retrieve an item or other information. |
| in_memory([file_image]) | Create an HDF5 file in memory, without an underlying file. |
| items() | Get a view object on member items. |
| iter_groups() | Create a 2-Band GeoTIFF for every speed and direction compound dataset. |
| keys() | Get a view object on member names. |
| make_container_for_dcf(data_coding_format) | |
| move(source, dest) | Move a link to a new location in the file. |
| pop(k[,d]) | Remove the specified key and return the corresponding value. If the key is not found, d is returned if given; otherwise KeyError is raised. |
| popitem() | Remove and return some (key, value) pair as a 2-tuple; raise KeyError if D is empty. |
| read() | |
| require_dataset(name, shape, dtype[, exact]) | Open a dataset, creating it if it doesn't exist. |
| require_group(name) | Return a group, creating it if it doesn't exist. |
| setdefault(k[,d]) | D.get(k, d); also set D[k] = d if k is not in D. |
| show_keys(obj[, indent]) | |
| to_geopackage([output_path]) | Create a geopackage file with a layer for each dataset in the HDF5 file. |
| to_geotiffs(output_directory[, creation_options]) | Create a GeoTIFF file for each regularly gridded dataset in the HDF5 file. |
| to_raster_datasets() | Return a GDAL raster dataset with a band for each gridded dataset in the HDF5 file. |
| to_vector_dataset() | Create an osgeo.ogr vector datasource with a layer for each dataset in the HDF5 file. |
| update([E, ]**F) | Update D from mapping/iterable E and F. |
| values() | Get a view object on member objects. |
| visit(func) | Recursively visit all names in this group and subgroups. |
| visit_links(func) | Recursively visit all names in this group and subgroups. |
| visititems(func) | Recursively visit names and objects in this group. |
| visititems_links(func) | Recursively visit links in this group. |
| write() | |

Attributes Documentation

- PRODUCT_SPECIFICATION = 'INT.IHO.S-111.1.2'

- attrs

Attributes attached to this object

- driver

Low-level HDF5 file driver used to open file

- epsg

- file

Return a File instance associated with this object

- filename

File name on disk

- id

Low-level identifier appropriate for this object

- libver

File format version bounds (2-tuple: low, high)

- meta_block_size

Meta block size (in bytes)

- mode

Python mode used to open file

- name

Return the full name of this object. None if anonymous.

- parent

Return the parent group of this object.

This is always equivalent to obj.file[posixpath.dirname(obj.name)]. Raises ValueError if this object is anonymous.

- ref

An (opaque) HDF5 reference to this object

- regionref

Create a region reference (Datasets only).

The syntax is regionref[<slices>]. For example, dset.regionref[...] creates a region reference in which the whole dataset is selected.

Can also be used to determine the shape of the referenced dataset (via .shape property), or the shape of the selection (via the .selection property).
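A minimal sketch of regionref in use. It assumes S111File inherits this property unchanged from h5py.File (through S100File), so it uses a plain h5py.File held in memory with the core driver; the file and dataset names are illustrative. The reference serves both as an index into the dataset and, like an object reference, as a way to look up the dataset itself.

```python
import h5py
import numpy as np


def region_reference_demo():
    # Illustrative names; backing_store=False keeps the file entirely in memory.
    with h5py.File("regionref-demo.h5", "w", driver="core", backing_store=False) as f:
        dset = f.create_dataset("grid", data=np.arange(100).reshape(10, 10))

        # Store the selection rows 2-3, columns 0-2 as a region reference.
        ref = dset.regionref[2:4, 0:3]

        # Indexing the dataset with the reference reads only the selected values.
        selected = dset[ref]

        # The reference also dereferences to the dataset it was created from.
        target_name = f[ref].name
        return selected, target_name


values, name = region_reference_demo()
print(sorted(values.ravel().tolist()))  # [20, 21, 22, 30, 31, 32]
print(name)                             # /grid
```

h5py returns a 1-D array for selections that do not form a regular grid, so the sketch flattens the result before printing it.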

- swmr_mode

Controls single-writer multiple-reader mode

- userblock_size

User block size (in bytes)

Methods Documentation

- build_virtual_dataset(name, shape, dtype, maxshape=None, fillvalue=None)

Assemble a virtual dataset in this group.

This is used as a context manager:

```python
import h5py
import numpy as np

# f is a file opened for writing
with f.build_virtual_dataset('virt', (10, 1000), np.uint32) as layout:
    layout[0] = h5py.VirtualSource('foo.h5', 'data', (1000,))
```

- name

(str) Name of the new dataset

- shape

(tuple) Shape of the dataset

- dtype

A numpy dtype for data read from the virtual dataset

- maxshape

(tuple, optional) Maximum dimensions if the dataset can grow. Use None for unlimited dimensions.

- fillvalue

The value used where no data is available.
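A self-contained sketch of build_virtual_dataset that stacks three single-row source files into one 3 x 4 virtual dataset. It calls h5py directly on the assumption that S111File inherits this method from h5py.File; the file names (source_0.h5, virtual.h5, and so on) are hypothetical. The virtual dataset stores only a mapping, so the source files must still be reachable when it is read.

```python
import os
import tempfile

import h5py
import numpy as np


def stack_with_build_virtual_dataset(workdir):
    """Write three 1-D source files, then expose them as one 3x4 virtual dataset."""
    paths = []
    for i in range(3):
        path = os.path.join(workdir, f"source_{i}.h5")  # hypothetical file names
        with h5py.File(path, "w") as src:
            # Each source file holds one row of the final virtual dataset.
            src.create_dataset("data", data=np.full(4, i, dtype="i4"))
        paths.append(path)

    vds_path = os.path.join(workdir, "virtual.h5")
    with h5py.File(vds_path, "w") as f:
        # The virtual dataset is created when the with-block exits.
        with f.build_virtual_dataset("virt", (3, 4), "i4") as layout:
            for i, path in enumerate(paths):
                layout[i] = h5py.VirtualSource(path, "data", shape=(4,))

    # Reading the virtual dataset pulls the values from the source files.
    with h5py.File(vds_path, "r") as f:
        return f["virt"][()]


with tempfile.TemporaryDirectory() as tmp:
    print(stack_with_build_virtual_dataset(tmp))
```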

- clear() → None

Remove all items from D.

- close()

Close the file. All open objects become invalid.

- copy(source, dest, name=None, shallow=False, expand_soft=False, expand_external=False, expand_refs=False, without_attrs=False)

Copy an object or group.

The source can be a path, Group, Dataset, or Datatype object. The destination can be either a path or a Group object. The source and destinations need not be in the same file.

If the source is a Group object, all objects contained in that group will be copied recursively.

When the destination is a Group object, by default the target will be created in that group with its current name (basename of obj.name). You can override that by setting “name” to a string.

There are various options which all default to “False”:

shallow: copy only immediate members of a group.

expand_soft: expand soft links into new objects.

expand_external: expand external links into new objects.

expand_refs: copy objects that are pointed to by references.

without_attrs: copy object without copying attributes.

Example:

```python
>>> from h5py import File
>>> f = File('myfile.hdf5', 'w')
>>> grp = f.create_group("MyGroup")
>>> list(f.keys())
['MyGroup']
>>> f.copy('MyGroup', 'MyCopy')
>>> list(f.keys())
['MyCopy', 'MyGroup']
```

- create_dataset(name, shape=None, dtype=None, data=None, **kwds)

Create a new HDF5 dataset

- name

Name of the dataset (absolute or relative). Provide None to make an anonymous dataset.

- shape

Dataset shape. Use “()” for scalar datasets. Required if “data” isn’t provided.

- dtype

Numpy dtype or string. If omitted, dtype(‘f’) will be used. Required if “data” isn’t provided; otherwise, overrides data array’s dtype.

- data

Provide data to initialize the dataset. If used, you can omit shape and dtype arguments.

Keyword-only arguments:

- chunks

(Tuple or int) Chunk shape, or True to enable auto-chunking. Integers can be used for 1D shape.

- maxshape

(Tuple or int) Make the dataset resizable up to this shape. Use None for axes within the tuple you want to be unlimited. Integers can be used for 1D shape. For 1D datasets with unlimited maxshape, a shape tuple of length 1 must be provided, (None,). Passing None sets maxshape to shape, making the dataset un-resizable, which is the default.

- compression

(String or int) Compression strategy. Legal values are ‘gzip’, ‘szip’, ‘lzf’. If an integer in range(10), this indicates gzip compression level. Otherwise, an integer indicates the number of a dynamically loaded compression filter.

- compression_opts

Compression settings. This is an integer for gzip, 2-tuple for szip, etc. If specifying a dynamically loaded compression filter number, this must be a tuple of values.

- scaleoffset

(Integer) Enable scale/offset filter for (usually) lossy compression of integer or floating-point data. For integer data, the value of scaleoffset is the number of bits to retain (pass 0 to let HDF5 determine the minimum number of bits necessary for lossless compression). For floating point data, scaleoffset is the number of digits after the decimal place to retain; stored values thus have absolute error less than 0.5*10**(-scaleoffset).

- shuffle

(T/F) Enable shuffle filter.

- fletcher32

(T/F) Enable fletcher32 error detection. Not permitted in conjunction with the scale/offset filter.

- fillvalue

(Scalar) Use this value for uninitialized parts of the dataset.

- track_times

(T/F) Enable dataset creation timestamps.

- track_order

(T/F) Track attribute creation order if True. If omitted use global default h5.get_config().track_order.

- external

(Iterable of tuples) Sets the external storage property, thus designating that the dataset will be stored in one or more non-HDF5 files external to the HDF5 file. Adds each tuple of (name, offset, size) to the dataset’s list of external files. Each name must be a str, bytes, or os.PathLike; each offset and size, an integer. If only a name is given instead of an iterable of tuples, it is equivalent to [(name, 0, h5py.h5f.UNLIMITED)].

- efile_prefix

(String) External dataset file prefix for dataset access property list. Does not persist in the file.

- virtual_prefix

(String) Virtual dataset file prefix for dataset access property list. Does not persist in the file.

- allow_unknown_filter

(T/F) Do not check that the requested filter is available for use. This should only be used with write_direct_chunk, where the caller compresses the data before handing it to h5py.

- rdcc_nbytes

Total size of the dataset’s chunk cache in bytes. The default size is 1024**2 (1 MiB) for HDF5 before 2.0 and 8 MiB for HDF5 2.0 or later.

- rdcc_w0

The chunk preemption policy for this dataset. This must be between 0 and 1 inclusive and indicates the weighting according to which chunks which have been fully read or written are penalized when determining which chunks to flush from cache. A value of 0 means fully read or written chunks are treated no differently than other chunks (the preemption is strictly LRU) while a value of 1 means fully read or written chunks are always preempted before other chunks. If your application only reads or writes data once, this can be safely set to 1. Otherwise, this should be set lower depending on how often you re-read or re-write the same data. The default value is 0.75.

- rdcc_nslots

The number of chunk slots in the dataset’s chunk cache. Increasing this value reduces the number of cache collisions, but slightly increases the memory used. Due to the hashing strategy, this value should ideally be a prime number. As a rule of thumb, this value should be at least 10 times the number of chunks that can fit in rdcc_nbytes bytes. For maximum performance, this value should be set approximately 100 times that number of chunks. The default value is 521.
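A sketch combining several of the keyword arguments above: an initially empty, chunked, gzip-compressed dataset that can grow without limit along its first axis. It calls h5py directly, assuming S111File exposes the same create_dataset; the file and dataset names are hypothetical. Elements added by resize() read back as the fill value until they are written.

```python
import h5py


def make_growable_dataset():
    # Hypothetical names; the core driver keeps everything in memory.
    with h5py.File("create-demo.h5", "w", driver="core", backing_store=False) as f:
        dset = f.create_dataset(
            "series",
            shape=(0, 4),          # start with no rows
            maxshape=(None, 4),    # rows unlimited, columns fixed at 4
            dtype="f4",
            chunks=(16, 4),        # resizable datasets must be chunked
            compression="gzip",
            compression_opts=4,    # gzip level (0-9)
            shuffle=True,
            fillvalue=-9999.0,     # returned for elements never written
        )
        dset.resize((2, 4))        # grow along the unlimited axis
        dset[0] = [0.5, 1.0, 1.5, 2.0]
        return dset.shape, dset.compression, dset[0].tolist(), dset[1].tolist()


shape, compression, row0, row1 = make_growable_dataset()
print(shape, compression)  # (2, 4) gzip
print(row1)                # [-9999.0, -9999.0, -9999.0, -9999.0]
```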

- create_dataset_like(name, other, **kwupdate)

Create a dataset similar to other.

- name

Name of the dataset (absolute or relative). Provide None to make an anonymous dataset.

- other

The dataset which the new dataset should mimic. All properties, such as shape, dtype, chunking, … will be taken from it, but no data or attributes are copied.

Any dataset keywords (see create_dataset) may be provided, including shape and dtype, in which case the provided values take precedence over those from other.
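A sketch of create_dataset_like, assuming S111File inherits the h5py behavior described above; all names are illustrative. It checks that the clone copies the template's layout but neither its data nor its attributes, and that an explicit shape overrides the template's.

```python
import h5py
import numpy as np


def clone_layout():
    with h5py.File("like-demo.h5", "w", driver="core", backing_store=False) as f:
        template = f.create_dataset(
            "template",
            data=np.arange(12, dtype="i2").reshape(3, 4),
            chunks=(1, 4),
            compression="gzip",
        )
        template.attrs["units"] = "m/s"

        # Shape, dtype, chunking and compression come from the template.
        clone = f.create_dataset_like("clone", template)
        # Explicit keywords take precedence over the template's properties.
        wider = f.create_dataset_like("wider", template, shape=(3, 8))

        return {
            "shape": clone.shape,
            "dtype": clone.dtype,
            "chunks": clone.chunks,
            "compression": clone.compression,
            "has_units": "units" in clone.attrs,   # attributes are not copied
            "all_zero": not clone[()].any(),      # data is not copied (fill value 0)
            "wider_shape": wider.shape,
        }


print(clone_layout())
```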

- create_empty_metadata()

- create_group(name, track_order=None, *, track_times=False)

Create and return a new subgroup.

Name may be absolute or relative. Fails if the target name already exists.

- track_order

Track dataset/group/attribute creation order under this group if True. If None use global default h5.get_config().track_order.

- track_times: bool or None, default: False

If True, store timestamps for this group in the file. If None, fall back to the default value.
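A sketch of the track_order flag, with illustrative group names and the flag set explicitly on both groups so the global default does not matter. With tracking enabled, member names come back in creation order rather than name order. Creating an existing name fails; the sketch assumes h5py reports this as ValueError, which is what the versions we know of do.

```python
import h5py


def group_order_demo():
    with h5py.File("group-demo.h5", "w", driver="core", backing_store=False) as f:
        tracked = f.create_group("tracked", track_order=True)
        untracked = f.create_group("untracked", track_order=False)
        for grp in (tracked, untracked):
            grp.create_group("b")   # created first
            grp.create_group("a")   # created second

        # create_group fails if the target name already exists.
        try:
            f.create_group("tracked")
            duplicate_rejected = False
        except ValueError:
            duplicate_rejected = True

        return list(tracked.keys()), list(untracked.keys()), duplicate_rejected


print(group_order_demo())
```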

- create_virtual_dataset(name, layout, fillvalue=None)

Create a new virtual dataset in this group.

See virtual datasets in the docs for more information.

- name

(str) Name of the new dataset

- layout

(VirtualLayout) Defines the sources for the virtual dataset

- fillvalue

The value to use where there is no data.
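A sketch of create_virtual_dataset with an explicit h5py.VirtualLayout, showing what fillvalue does: only the first row of the layout is mapped to a source, so the second row reads back as the fill value. File names are hypothetical, and S111File is assumed to inherit this method from h5py.File.

```python
import os
import tempfile

import h5py
import numpy as np


def vds_with_fillvalue(workdir):
    src_path = os.path.join(workdir, "source.h5")   # hypothetical file name
    with h5py.File(src_path, "w") as src:
        src.create_dataset("data", data=np.arange(4, dtype="i4"))

    # Two-row layout; only row 0 is mapped to a source dataset.
    layout = h5py.VirtualLayout(shape=(2, 4), dtype="i4")
    layout[0] = h5py.VirtualSource(src_path, "data", shape=(4,))

    vds_path = os.path.join(workdir, "vds.h5")
    with h5py.File(vds_path, "w") as f:
        # Unmapped elements (row 1) read back as the fill value.
        f.create_virtual_dataset("vds", layout, fillvalue=-1)

    with h5py.File(vds_path, "r") as f:
        return f["vds"][()]


with tempfile.TemporaryDirectory() as tmp:
    print(vds_with_fillvalue(tmp))
```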

- flush()

Tell the HDF5 library to flush its buffers.

- get(name, default=None, getclass=False, getlink=False)

Retrieve an item or other information.

- “name” given only:

Return the item, or “default” if it doesn’t exist

- “getclass” is True:

Return the class of object (Group, Dataset, etc.), or “default” if nothing with that name exists

- “getlink” is True:

Return HardLink, SoftLink or ExternalLink instances. Return “default” if nothing with that name exists.

- “getlink” and “getclass” are True:

Return HardLink, SoftLink and ExternalLink classes. Return “default” if nothing with that name exists.

Example:

```python
>>> from h5py import SoftLink
>>> cls = group.get('foo', getclass=True, getlink=True)
>>> if cls == SoftLink:
...     print("'foo' is a soft link")
```

- classmethod in_memory(file_image=None, **kwargs)

Create an HDF5 file in memory, without an underlying file

- file_image

The initial file contents as bytes (or anything that supports the Python buffer interface). HDF5 takes a copy of this data.

- block_size

Chunk size for new memory allocations (default 64 KiB).

Other keyword arguments are like File(), although name, mode, driver and locking can’t be passed.

- items()

Get a view object on member items

- iter_groups()

Create a 2-Band GeoTIFF for every speed and direction compound dataset within each HDF5 file.

- Args:

- input_path: Path to a single S-111 HDF5 file or a directory containing one or more.

- output_path: Path to a directory where the GeoTIFF file(s) will be generated.

- keys()

Get a view object on member names

- static make_container_for_dcf(data_coding_format)

- move(source, dest)

Move a link to a new location in the file.

If “source” is a hard link, this effectively renames the object. If “source” is a soft or external link, the link itself is moved, with its value unmodified.
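A sketch of the two cases described above, using a plain in-memory h5py.File on the assumption that S111File behaves the same; all names are illustrative. Because the soft link stores an absolute path that the move leaves untouched, it still resolves from its new location.

```python
import h5py


def move_demo():
    with h5py.File("move-demo.h5", "w", driver="core", backing_store=False) as f:
        f.create_group("archive")

        # Hard link: moving it renames (and here relocates) the object itself.
        f.create_dataset("raw", data=[1, 2, 3])
        f.move("raw", "archive/renamed")

        # Soft link: the link moves, but the path it stores is unchanged.
        f.create_dataset("data", data=[4, 5, 6])
        f["alias"] = h5py.SoftLink("/data")
        f.move("alias", "archive/alias")

        return (
            "raw" in f,
            f["archive/renamed"][()].tolist(),
            f.get("archive/alias", getlink=True).path,
            f["archive/alias"][()].tolist(),
        )


print(move_demo())  # (False, [1, 2, 3], '/data', [4, 5, 6])
```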

- pop(k[, d]) → v

Remove the specified key and return the corresponding value. If the key is not found, d is returned if given; otherwise KeyError is raised.

- popitem() → (k, v)

Remove and return some (key, value) pair as a 2-tuple; raise KeyError if D is empty.

- read()

- require_dataset(name, shape, dtype, exact=False, **kwds)

Open a dataset, creating it if it doesn’t exist.

If keyword “exact” is False (default), an existing dataset must have the same shape and a conversion-compatible dtype to be returned. If True, the shape and dtype must match exactly.

If keyword “maxshape” is given, the maxshape and dtype must match instead.

If any of the keywords “rdcc_nslots”, “rdcc_nbytes”, or “rdcc_w0” are given, they will be used to configure the dataset’s chunk cache.

Other dataset keywords (see create_dataset) may be provided, but are only used if a new dataset is to be created.

Raises TypeError if an incompatible object already exists, or if the shape, maxshape or dtype don’t match according to the above rules.

- require_group(name)

Return a group, creating it if it doesn’t exist.

TypeError is raised if something with that name already exists that isn’t a group.
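A sketch of require_group and require_dataset, assuming S111File inherits the h5py semantics described above; group and dataset names are hypothetical. Repeated calls reuse existing objects, exact=False accepts a requested dtype that can be safely cast to the stored one (float32 to float64 here), and the listed mismatches raise TypeError.

```python
import h5py


def require_demo():
    with h5py.File("require-demo.h5", "w", driver="core", backing_store=False) as f:
        f.require_group("surface")   # created
        f.require_group("surface")   # already exists: opened, not re-created

        f.require_dataset("surface/speed", shape=(3,), dtype="f8")
        # exact=False (default): float32 can be safely cast to the stored float64.
        dset = f.require_dataset("surface/speed", shape=(3,), dtype="f4")

        errors = 0
        for kwargs in (
            {"shape": (3,), "dtype": "f4", "exact": True},  # dtype must match exactly
            {"shape": (4,), "dtype": "f8"},                 # shape mismatch
        ):
            try:
                f.require_dataset("surface/speed", **kwargs)
            except TypeError:
                errors += 1
        try:
            f.require_group("surface/speed")   # exists, but is not a group
        except TypeError:
            errors += 1

        return len(f["surface"]), dset.dtype, errors


print(require_demo())
```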

- setdefault(k[, d]) → D.get(k, d)

Also set D[k] = d if k is not in D.

- show_keys(obj, indent=0)

- to_geopackage(output_path=None)

Create a geopackage file with a layer for each dataset in the HDF5 file. Based on the to_vector_dataset method, so only supports ungeorectified gridded data (DCF=3).

- Parameters:

output_path (str or Path) – Full path of the geopackage file. If None, the name of the HDF5 file is used with a .gpkg extension.

- Return type:

None

- to_geotiffs(output_directory, creation_options=None)

Creates a GeoTIFF file for each regularly gridded dataset in the HDF5 file. If only one dataset is found, a single GeoTIFF file will be created named the same as the .h5 but with a .tif extension. If multiple datasets are found, multiple GeoTIFF files will be created named the same as the .h5 but with a _{timepoint}.tif extension. Supports DCF 2 and 9.

- Parameters:

output_directory (str or Path) – Directory in which to save the GeoTIFF file(s)

creation_options (list) – List of GDAL creation options

- Returns:

List of filenames created

- Return type:

list

- to_raster_datasets()

Return a GDAL raster dataset with a band for each gridded dataset in the HDF5 file. The returned dataset uses the upper-left corner as its origin and has a negative dy value in the geotransform, following the GeoTIFF convention.

- Returns:

(dataset, group_instance) – a GDAL raster and an h5py group instance which could be queried for additional attributes the raster was created from

- Return type:

tuple
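The upper-left, negative-dy convention mentioned above can be illustrated without GDAL. The helper below is hypothetical and not part of s100py; it assumes row 0 of the grid is the southernmost row and that the origin is the lower-left corner of the lower-left cell. If the origin instead marks the center of the first cell, the top-left corner shifts by half a cell in each direction.

```python
import numpy as np


def north_up_geotransform(origin_x, origin_y, spacing_x, spacing_y, values):
    """Hypothetical helper (not part of s100py).

    Assumes row 0 of ``values`` is the southernmost row and that
    (origin_x, origin_y) is the lower-left corner of the lower-left cell.
    Returns a GDAL-style geotransform and the rows reordered north to south.
    """
    n_rows = values.shape[0]
    top_y = origin_y + n_rows * spacing_y
    # GDAL order: (top-left x, pixel width, row rotation,
    #              top-left y, column rotation, pixel height)
    geotransform = (origin_x, spacing_x, 0.0, top_y, 0.0, -spacing_y)
    # Flip rows so that row 0 is the northernmost row, as GeoTIFF expects.
    return geotransform, np.flipud(values)


grid = np.arange(12, dtype="f4").reshape(3, 4)   # 3 rows x 4 columns
gt, north_up = north_up_geotransform(-70.0, 40.0, 0.5, 0.25, grid)
print(gt)  # (-70.0, 0.5, 0.0, 40.75, 0.0, -0.25)
```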

- to_vector_dataset()

Create an osgeo.ogr vector datasource with a layer for each dataset in the HDF5 file. Currently only supports ‘Ungeorectified gridded arrays’ (DCF=3).

- Return type:

osgeo.ogr.DataSource

- update([E, ]**F) → None

Update D from mapping/iterable E and F:

- If E is present and has a .keys() method, does: for k in E.keys(): D[k] = E[k]
- If E is present and lacks a .keys() method, does: for (k, v) in E: D[k] = v
- In either case, this is followed by: for k, v in F.items(): D[k] = v
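A sketch of the dictionary-style methods (update, setdefault, pop) on a plain in-memory h5py.File, assuming S111File inherits them unchanged; all names are illustrative. Because these methods store values with D[k] = v, NumPy arrays become new datasets. setdefault returns the existing dataset when the key is present, but the default value itself when it is not.

```python
import h5py
import numpy as np


def mapping_demo():
    with h5py.File("mapping-demo.h5", "w", driver="core", backing_store=False) as f:
        # update(): each value is stored with f[key] = value, creating datasets.
        f.update({"u": np.zeros(3)}, v=np.ones(3))

        # setdefault(): key present, so the existing dataset is returned.
        existing = f.setdefault("u", np.full(3, 7.0))
        # setdefault(): key absent, so the default is stored and returned.
        stored = f.setdefault("w", np.full(3, 2.0))

        # pop(): remove the member's link; a default avoids KeyError.
        f.pop("v")
        missing = f.pop("not_there", None)

        return {
            "keys": sorted(f.keys()),
            "u": existing[()].tolist(),
            "w": f["w"][()].tolist(),
            "stored_is_default": isinstance(stored, np.ndarray),
            "missing": missing,
        }


print(mapping_demo())
```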

- values()

Get a view object on member objects

- visit(func)

Recursively visit all names in this group and subgroups.

Note: visit ignores soft and external links. To visit those, use visit_links.

You supply a callable (function, method or callable object); it will be called exactly once for each link in this group and every group below it. Your callable must conform to the signature:

func(<member name>) => <None or return value>

Returning None continues iteration, returning anything else stops and immediately returns that value from the visit method. The iteration order is lexicographic.

Example:

```python
>>> # List the entire contents of the file
>>> f = File("foo.hdf5")
>>> list_of_names = []
>>> f.visit(list_of_names.append)
```
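A sketch of the early-exit rule above: the callback returns the first dataset name it sees, which ends the traversal. It assumes S111File inherits visit from h5py.File; the names a/x and b/y are illustrative.

```python
import h5py


def first_dataset(group):
    visited = []

    def check(name):
        visited.append(name)
        if isinstance(group[name], h5py.Dataset):
            return name   # non-None: visit() stops and returns this value
        return None       # None: keep visiting

    return group.visit(check), visited


with h5py.File("visit-demo.h5", "w", driver="core", backing_store=False) as f:
    # Intermediate groups "a" and "b" are created automatically.
    f.create_dataset("a/x", data=[1])
    f.create_dataset("b/y", data=[2])
    found, seen = first_dataset(f)

print(found)  # a/x
```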

- visit_links(func)

Recursively visit all names in this group and subgroups. Each link will be visited exactly once, regardless of its target.

You supply a callable (function, method or callable object); it will be called exactly once for each link in this group and every group below it. Your callable must conform to the signature:

func(<member name>) => <None or return value>

Returning None continues iteration, returning anything else stops and immediately returns that value from the visit method. The iteration order is lexicographic.

Example:

```python
>>> # List the entire contents of the file
>>> f = File("foo.hdf5")
>>> list_of_names = []
>>> f.visit_links(list_of_names.append)
```

- visititems(func)

Recursively visit names and objects in this group.

Note: visititems ignores soft and external links. To visit those, use visititems_links.

You supply a callable (function, method or callable object); it will be called exactly once for each link in this group and every group below it. Your callable must conform to the signature:

func(<member name>, <object>) => <None or return value>

Returning None continues iteration, returning anything else stops and immediately returns that value from the visit method. The iteration order is lexicographic.

Example:

```python
>>> # Get a list of all datasets in the file
>>> mylist = []
>>> def func(name, obj):
...     if isinstance(obj, Dataset):
...         mylist.append(name)
...
>>> f = File('foo.hdf5')
>>> f.visititems(func)
```

- visititems_links(func)

Recursively visit links in this group. Each link will be visited exactly once, regardless of its target.

You supply a callable (function, method or callable object); it will be called exactly once for each link in this group and every group below it. Your callable must conform to the signature:

func(<member name>, <link>) => <None or return value>

Returning None continues iteration, returning anything else stops and immediately returns that value from the visit method. The iteration order is lexicographic.

Example:

```python
>>> # Get a list of all softlinks in the file
>>> mylist = []
>>> def func(name, link):
...     if isinstance(link, SoftLink):
...         mylist.append(name)
...
>>> f = File('foo.hdf5')
>>> f.visititems_links(func)
```

- write()

- __init__(*args, **kywrds)

Create a new file object.

See the h5py user guide for a detailed explanation of the options.

- name

Name of the file on disk, or file-like object. Note: for files created with the ‘core’ driver, HDF5 still requires this be non-empty.

- mode

- r: Read-only, file must exist (default)
- r+: Read/write, file must exist
- w: Create file, truncate if it exists
- w- or x: Create file, fail if it exists
- a: Read/write if it exists, create otherwise

- driver

Name of the driver to use. Legal values are None (default, recommended), ‘core’, ‘sec2’, ‘direct’, ‘stdio’, ‘mpio’, ‘ros3’.

- libver

Library version bounds. Supported values: ‘earliest’, ‘v108’, ‘v110’, ‘v112’, ‘v114’, ‘v200’ and ‘latest’ depending on the version of libhdf5 h5py is built against.

- userblock_size

Desired size of user block. Only allowed when creating a new file (mode w, w- or x).

- swmr

Open the file in SWMR read mode. Only used when mode = ‘r’.

- rdcc_nslots

The number of chunk slots in the raw data chunk cache for this file. Increasing this value reduces the number of cache collisions, but slightly increases the memory used. Due to the hashing strategy, this value should ideally be a prime number. As a rule of thumb, this value should be at least 10 times the number of chunks that can fit in rdcc_nbytes bytes. For maximum performance, this value should be set approximately 100 times that number of chunks. The default value is 521. Applies to all datasets unless individually changed.

- rdcc_nbytes

Total size of the dataset chunk cache in bytes. The default size per dataset is 1024**2 (1 MiB) for HDF5 before 2.0 and 8 MiB for HDF5 2.0 and later. Applies to all datasets unless individually changed.

- rdcc_w0

The chunk preemption policy for all datasets. This must be between 0 and 1 inclusive and indicates the weighting according to which chunks which have been fully read or written are penalized when determining which chunks to flush from cache. A value of 0 means fully read or written chunks are treated no differently than other chunks (the preemption is strictly LRU) while a value of 1 means fully read or written chunks are always preempted before other chunks. If your application only reads or writes data once, this can be safely set to 1. Otherwise, this should be set lower depending on how often you re-read or re-write the same data. The default value is 0.75. Applies to all datasets unless individually changed.

- track_order

Track dataset/group/attribute creation order under root group if True. If None use global default h5.get_config().track_order.

- track_times: bool or None, default: False

If True, store timestamps for this group in the file. If None, fall back to the default value.

- fs_strategy

The file space handling strategy to be used. Only allowed when creating a new file (mode w, w- or x). Defined as:

- "fsm": FSM, Aggregators, VFD
- "page": Paged FSM, VFD
- "aggregate": Aggregators, VFD
- "none": VFD

If None, use HDF5 defaults.

- fs_page_size

File space page size in bytes. Only used when fs_strategy="page". If None, use the HDF5 default (4096 bytes).

- fs_persist

A boolean value to indicate whether free space should be persistent or not. Only allowed when creating a new file. The default value is False.

- fs_threshold

The smallest free-space section size that the free space manager will track. Only allowed when creating a new file. The default value is 1.

- page_buf_size

Page buffer size in bytes. Only allowed for HDF5 files created with fs_strategy="page". Must be a power of two and greater than or equal to the file space page size when creating the file. It is not used by default.

- min_meta_keep

Minimum percentage of metadata to keep in the page buffer before allowing pages containing metadata to be evicted. Applicable only if page_buf_size is set. Default value is zero.

- min_raw_keep

Minimum percentage of raw data to keep in the page buffer before allowing pages containing raw data to be evicted. Applicable only if page_buf_size is set. Default value is zero.

- locking

The file locking behavior. Defined as:

False (or “false”) – Disable file locking

True (or “true”) – Enable file locking

“best-effort” – Enable file locking but ignore some errors

None – Use HDF5 defaults

Warning

The HDF5_USE_FILE_LOCKING environment variable can override this parameter.

- alignment_threshold

Together with alignment_interval, this property ensures that any file object greater than or equal in size to the alignment threshold (in bytes) will be aligned on an address which is a multiple of alignment interval.

- alignment_interval

This property should be used in conjunction with alignment_threshold. See the description above. For more details, see https://support.hdfgroup.org/documentation/hdf5/latest/group___f_a_p_l.html#gab99d5af749aeb3896fd9e3ceb273677a

- meta_block_size

Set the current minimum size, in bytes, of new metadata block allocations. See https://support.hdfgroup.org/documentation/hdf5/latest/group___f_a_p_l.html#ga8822e3dedc8e1414f20871a87d533cb1

- Additional keywords

Passed on to the selected file driver.
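A sketch of the mode values listed above, using a real temporary file because w, x and a are only meaningful against a path on disk. The file name is hypothetical, and S111File is assumed to accept the same mode strings as h5py.File.

```python
import os
import tempfile

import h5py


def mode_demo(workdir):
    # Hypothetical file name inside a caller-supplied directory.
    path = os.path.join(workdir, "modes.h5")

    with h5py.File(path, "w") as f:      # create; truncate if it exists
        f.attrs["version"] = 1

    with h5py.File(path, "a") as f:      # read/write; create only if missing
        f.attrs["version"] = 2

    try:
        h5py.File(path, "x").close()     # create; fails because the file exists
        exclusive_failed = False
    except OSError:                      # FileExistsError is a subclass of OSError
        exclusive_failed = True

    with h5py.File(path, "r") as f:      # read-only (the default mode)
        return f.mode, int(f.attrs["version"]), exclusive_failed


with tempfile.TemporaryDirectory() as tmp:
    print(mode_demo(tmp))  # ('r', 2, True)
```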